It is comforting to believe that our behavior is based upon our intentions and chosen beliefs. However, research into implicit bias suggests that this is decidedly not always the case. But what is implicit bias? And why should we be concerned with it?

What is Implicit Bias?

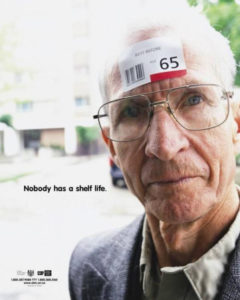

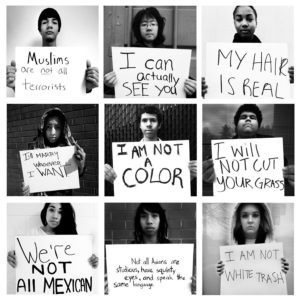

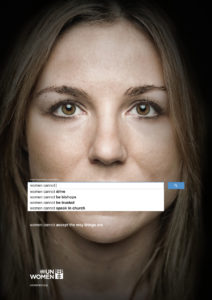

Implicit bias refers to the unconscious or automatic attitudes of an individual that are activated without awareness or intentional control. While implicit bias is part of the foundation of racism and other negative “isms” (and is linked to activation of the brain’s amygdala), it is not synonymous with these “isms.” This is an important distinction to make. Implicit biases can affect us in a number of ways, many of which are rather benign (e.g., being swayed by advertising campaigns, following the same path to work), and others of which are quite malignant (e.g., implicit biases that evolve into flat-out racism, weightism, sexism, ageism, heightism, etc.). For purposes of clarity:

- Biases — Associations made in favor of or against one thing, person, or group compared with another, usually in a way considered to be unfair

- Attitudes — Evaluative judgments of an object, a person, or a social group; these can be either positive or negative

- Stereotypes — Associations of a person or a social group with a perceived consistent set of positive or negative traits. Stereotypes come in many flavors: racial, cultural, gender, group/cohort, etc.

- Prejudices — Unfair attitudes toward a social group or member of that group; these can be either positive or negative, though are typically negative

The concept of implicit bias evolved out of psychological research in the 1970s focusing on two related features of “social cognition,” or how we think about other people and other groups.

According to the Kirwan Institute for the Study of Race & Ethnicity, some of the defining characteristics of implicit bias include:

- Implicit biases are pervasive. Everyone possesses them, even people with avowed commitments to impartiality such as judges.

- Implicit and explicit biases are related but distinct mental constructs. They are not mutually exclusive and may even reinforce each other.

- The implicit associations we hold do not necessarily align with our declared beliefs or even reflect stances we would explicitly endorse.

- We generally tend to hold implicit biases that favor our own ingroup, though research has shown that we can still hold implicit biases against our ingroup.

- Implicit biases are malleable. Our brains are incredibly complex, and the implicit associations that we have formed can be gradually unlearned through a variety of de-biasing techniques.

Explicit in implicit bias is the emphasis on automatic and unconscious information processing (as opposed to controlled and conscious information processing). Controlled information processing is how we commonly think of our own cognition: an active and deliberate process that demands our attention and energy. Conversely, automatic information processing occurs independent of our will, has nearly unlimited capacity, and is difficult to voluntarily suppress. For example, if we take ‘race’ and ‘violence’ as the subject matter:

- Reading an article about violence in policing and racial disparities in how the police treat people, rationally examining the data, and drawing conclusions about the criminal justice system or economic system based on this data is a form of controlled information processing.

- Absorbing media images that repeatedly pair particular people with violence (for example), and grouping that information together automatically without actively attending to the process, is a form of automatic information processing.

Another emphasis advanced by implicit bias researchers is that one’s implicit attitudes often contradict one’s explicit beliefs. Moreover, one can be (and often is) completely unaware of these biases. Because of this contradiction, researchers in the field attempt to measure bias indirectly, so that the subject is not aware of what is being measured.

Implicit Bias Tests

The most prevalent form of testing is the Implicit Association Test (IAT). Developed by Tony Greenwald (of University of Washington), Mahzarin Banaji (of Harvard University), and Brian Nosek (of University of Virginia), the IAT measures one’s reaction time when sorting words or pictures into groups as fast as possible. The Implicit Association Test is used to detect biases for a wide array of topics, including gender, race, science, career, weight, age, sexuality, and disability. Bias is revealed when one sorts more quickly and accurately when pairings are consistent with certain stereotypes than when pairings are inconsistent with those stereotypes.
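
To make the reaction-time comparison concrete, here is a minimal sketch in Python of how a difference between stereotype-consistent and stereotype-inconsistent pairings could be turned into a single number. It is an illustration under stated assumptions, not the scoring procedure used by Project Implicit: the function name `iat_effect`, the variable names, and the reaction times are all hypothetical, and the sketch looks only at speed, ignoring sorting accuracy.

```python
from statistics import mean, stdev


def iat_effect(consistent_ms, inconsistent_ms):
    """Simplified, hypothetical IAT-style effect score.

    Takes the difference in mean reaction time between the two
    pairing conditions and divides it by the standard deviation of
    all trials combined, so the result is expressed in units of the
    overall spread rather than in milliseconds.
    """
    all_trials = list(consistent_ms) + list(inconsistent_ms)
    spread = stdev(all_trials)
    return (mean(inconsistent_ms) - mean(consistent_ms)) / spread


# Made-up reaction times in milliseconds, for illustration only.
consistent = [600, 650, 700]    # pairings that match a stereotype
inconsistent = [800, 850, 900]  # pairings that contradict it

score = iat_effect(consistent, inconsistent)
print(f"effect score: {score:.2f}")
```

A positive score here means the stereotype-consistent pairings were sorted faster on average, which is the pattern the paragraph above describes as revealing bias. A score near zero would mean no measurable difference in speed between the two conditions.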

Take the Implicit Association Test(s) for Yourself Here!

Implicit Bias & Behavior: Why Should We Be Concerned?

Meta-studies continually conclude that implicit bias is widespread and predictive of behavior. This is concerning. Why? The Stanford Encyclopedia of Philosophy summarizes just a few examples of how and why we ought to be concerned with implicit bias and the behaviors it elicits, especially those behaviors that fall on the more malignant end of the spectrum (and can give way, when unchecked, to numerous undesirable and morally problematic “isms”). These include hiring and health-related discrimination, as well as a tendency to react more or less violently to a person or situation depending on race or ethnicity. As it concerns implicit biases and race:

The stronger one’s associations of good with white faces and bad with black faces on the black-white IAT, the more likely one is to perpetrate hiring discrimination (Bertrand et al. 2005); to “shoot” more unarmed black men in a computer simulation than unarmed white men (Correll et al. 2002; Glaser & Knowles 2008); and to diagnose white patients described in case vignettes with coronary artery disease and prescribe thrombolysis for them compared to black patients described as having equivalent symptoms and electrocardiogram results (Green et al. 2007). Overall, the IAT appears to predict many distinct kinds of behavior, in particular non-verbal and “micro-behavior” (Valian 1998, 2005; Dovidio et al. 2002; Cortina 2008; Cortina et al. 2011; Brennan 2013). – Stanford Encyclopedia of Philosophy

Other Implications: Agency & Responsibility

Because of the automatic and unconscious nature of cognition revealed by studies of implicit bias, both epistemology and moral philosophy are re-evaluating understandings of the reliability and agency of thought, as well as the responsibility we bear for our own cognitive processes.

The debate around bias in epistemology revolves primarily around the following question: Can we know or access cognitive functions that occur without our attention or will? Psychologists Bertram Gawronski, Wilhelm Hofmann, and Christopher J. Wilbur divide knowledge of automatic cognition into three kinds: source, content, and impact. This essentially translates to a three-part question: can we understand where our attitudes stem from, what our attitudes really are, and what consequences our attitudes have for our behavior?

By examining collected data from implicit bias tests, they argue that people can be aware of the content of their attitudes, but seem to lack awareness of their source or impact. Because of this lack of awareness, we are faced with two philosophical dilemmas:

- Should we adopt a position of extreme skepticism toward what we know?

- If we accept that we do not have control over our attitudes and that our behavior stems from an automatic involuntary cognitive process, how do we assign moral responsibility to these attitudes and behaviors?

The epistemic problem implicit bias presents is more radical than many other forms of philosophical skepticism because it is not just one’s perceptions that must be doubted but one’s rational faculties as well. Whereas Descartes’ skepticism is directed at the external world, holding that we should distrust sensory data and instead draw conclusions in a purely rational way, implicit bias suggests that our cognitive processes are compromised by automatic processes beyond the supervision of actively focused rationality. And because of this, a moral quandary arises: namely, the increased difficulty of assigning moral responsibility to behaviors that result from automatic processes.

Can moral responsibility coexist with our cognition if it occurs independent of our will and even our awareness? It is not beyond the scope of reason, though it is a hard idea to wrap one’s head around. Thankfully, while research does suggest that implicit bias is an unavoidable feature of our mental faculties, our implicit biases are not beyond intervention. How so? Research suggests that our biases can be mitigated by working to change the underlying associations (of black faces with guns, for example) and the effects of these associations on behavior (by recognizing and reversing responses to stereotypical patterns).

Because we are capable of recognizing the associations involved in implicit biases, we are in a better position to correct them, as well as mitigate the effects. Moreover, we can more confidently adhere to and assign moral responsibility for the attitudes and behaviors of ourselves and others (phew!).

To learn more about implicit bias and the material from which the content of this article was inspired, see the following sources:

- Harvard Implicit Association Test(s) – Project Implicit

- Stanford Encyclopedia of Philosophy

- Kirwan Institute for the Study of Race and Ethnicity

- New York Times – “In Bias Tests, Shades of Gray”

- Psychology Today – “Overcoming Implicit Bias and Racial Anxiety”

- Psychology Today – “Studies of Unconscious Bias: Racism Not Always By Racists”

- California Law Review, vol. 94:4, “Implicit Bias: Scientific Foundations” (2006)

- Journal of General Internal Medicine, vol. 28:11, “Physicians and Implicit Bias: How Doctors May Unwittingly Perpetuate Health Care Disparities” (2013)

- Consciousness and Cognition, vol. 15, “Are ‘Implicit’ Attitudes Unconscious?” (2006)